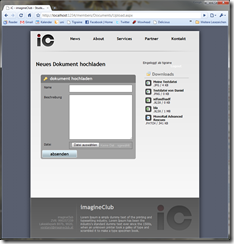

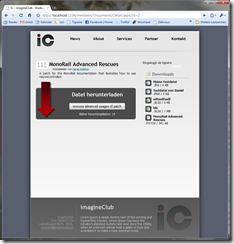

Some time ago, while writing the CSS for the ImagineClub website, I found out the hard way that there are two ways of developing XHTML sites: a clean separation of presentation and markup, or a mix of both to achieve CSS reusability.

What I mean by mixing is markup code like the following:

<div class="floated thick-border highlight">
  <p>Stuff</p>
</div>

I have problems with the above, since I am clearly mixing presentation with data. I want my XHTML to transport structured data that gets styled through CSS. Separation of concerns teaches us that we should rarely have to touch the markup if we want to change appearance, and we should not have to touch the markup if we change the data we are presenting.

So the above code blurs the line: while it’s still somewhat semantic markup, it’s also intermingled with presentation concerns, which I don’t like at all.

There is a reason code like the above exists: CSS is endlessly verbose and leads to tons of code duplication if it is only applied to DOM structures and IDs. So web designers naturally started combining classes in the markup to avoid some of that duplication while still leveraging the power of CSS.

During a chat with Kristof about this particular issue, we concluded that my way of doing it was theoretically better, but only if backed by some sort of server-side framework that enhances CSS to avoid the duplication and verbosity that come with my approach.

And looking over the Microsoft fence, somewhere in those fluffy green lands inhabited by Ruby people, I found the answer to my problems: LessCss!

But I don’t do Ruby development, so I filed it away under “cool but unreachable” until I came across this tweet some two weeks ago:

I was immediately sold on the idea and contacted Erik to contribute to the project. Turns out he’s a really nice guy who is working hard on a parser that reads LessCss fully in managed code through the use of ANTLR.

But being the simple guy I am, one of the first questions I had for Erik was: “Why don’t we just wrap the original Less project inside the DLR and run it from there?”

Well, at that time neither Erik nor I had a real answer to that, so I decided to give it a try, while Erik had some very valid reasons to continue working on a full C# implementation.

And now this is the post to tell you of my Pyrrhic victory:

First: I did it. It’s here and it can be used: IronLess.Net.

Disclaimer: It’s a pain in the ass to use.

Installing IronLess.Net

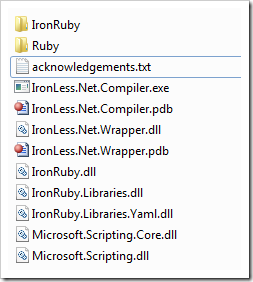

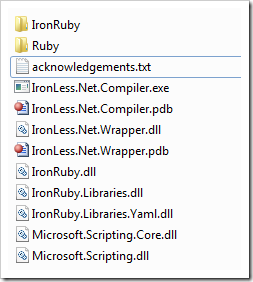

When I write a library I want it to be one thing: self-contained. I don’t want to mess with your local IronRuby installation or with the current gem setup on your machine. So IronLess comes with a full catalog of IronRuby/Ruby class libraries, all packed into a 0.9 MB 7-Zip file. This file contains 2,228 files in 482 folders, which together form the complete IronRuby environment needed to run the original LessCss.

I did some (simple) magic with NAnt to alleviate that pain, so if you check out the code you’ll just have to run build.bat: NAnt will compile IronLess and also extract the IronRuby libraries to your build folder, making it completely self-contained. You’ll end up with the following folder structure, which you just need to move (copying will take forever) to your /bin folder:

Once that’s done you only have to add the following HttpHandler to your ASP.NET web.config and add some initialization code to your Global.asax.cs to be all set.

web.config:

<add verb="*" path="*.less" type="IronLess.Wrapper.IronLessHandler, IronLess.Wrapper" validate="false"/>

Global.asax.cs:

protected void Application_Start()
{
    // Kicks off the (slow) load of the LessCss Ruby script once per application start.
    IronLess.Wrapper.RubyEngine.Initialize(Context);
}

That should suffice to route all requests for a .less file to the IronLessHandler, which will compile .less to .css using LessCss.

Downsides:

There are a thousand reasons to use this, but I have another thousand reasons why you shouldn’t:

- Startup is painfully slow: initializing the LessCss Ruby script takes more than 20 seconds. Since we call the RubyEngine initializer in Application_Start, every application start now takes that long: the call kicks off the init of the LessCss script, which in turn makes IronRuby parse all the imported libraries. That alone makes it unbearable, since every debug run in Visual Studio now takes 20 seconds to load.

- LessCss through the DLR can’t read Windows line endings. You have to open your .less file in an editor like Notepad++ and convert it to UNIX-style endings (CR-LF -> LF). Not pretty, and even less practical.

- Error handling and debugging are impossible. I didn’t dare to modify the LessCss.rb script, so errors are written to a command line that you never see. If your .less file has errors, you get no usable information on why it failed to load.

- Compilation of .less to .css takes between 50 and 200 ms inside a running web app. Running the IronLess.Compiler takes about 30 seconds. Both figures are way too slow to be actually usable; going with the native Ruby gem from the command line would be much faster.
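
The line-ending conversion mentioned in the list above does not have to be done by hand in an editor. Here is a minimal C# sketch, not part of IronLess, that rewrites a file with UNIX line endings. The `LineEndings` class and its method names are made up for illustration, and it assumes the file is UTF-8 or plain ASCII.

```csharp
using System;
using System.IO;

// Hypothetical helper, not part of IronLess or LessCss.
public static class LineEndings
{
    // Converts Windows (CR-LF) and lone CR line endings to UNIX (LF).
    public static string ToUnix(string text)
    {
        return text.Replace("\r\n", "\n").Replace("\r", "\n");
    }

    // Rewrites the file in place, but only if the conversion changed anything.
    // Assumption: the file is UTF-8 or ASCII; it is written back as UTF-8.
    public static void NormalizeFile(string path)
    {
        string original = File.ReadAllText(path);
        string converted = ToUnix(original);
        if (converted != original)
        {
            File.WriteAllText(path, converted);
        }
    }
}
```

You could call `LineEndings.NormalizeFile` on each .less file as a build step or once at application start; where exactly to hook it in is left open here.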

So, why bother?

Actually, that’s the question I asked myself halfway through doing IronLess. Since it’s so painful to deploy and start up, I don’t see any real use for this at this point. If someone has the skills to make the DLR run LessCss faster than light by flipping some magic bit, please go ahead and fork my repository on GitHub and tell the world about it.

Also, I find the installation process just too painful. C’mon, 2,228 files in the /bin directory just to write CSS isn’t cutting it for me. What I want is one simple DLL that I reference from /lib, and I’m set.

Going further

I’m going to help Erik get Less.Net out of the door as quickly as possible, in the hope of bringing something much needed to the ASP.NET world while avoiding all the trouble with external dependencies that you get when calling into the Ruby world from .NET code.

Also, it’s a nice excuse for me to dig into ANTLR.

So finally: if you decide to use this, you are entering a world of hurt. Either you improve IronLess to a point where it becomes usable (I can’t), or you wait for Less.Net.
